I don’t have much experience with Android. I use iOS on my phone, macOS and Linux for my computing, and Roku for my TV. I briefly owned a Meta Quest VR headset, which ran a version of Android, but I never dug deep into it. I’ve also done a tiny bit of Android app development, but that was nearly ten years ago (and I only ever ran the apps on a simulator).

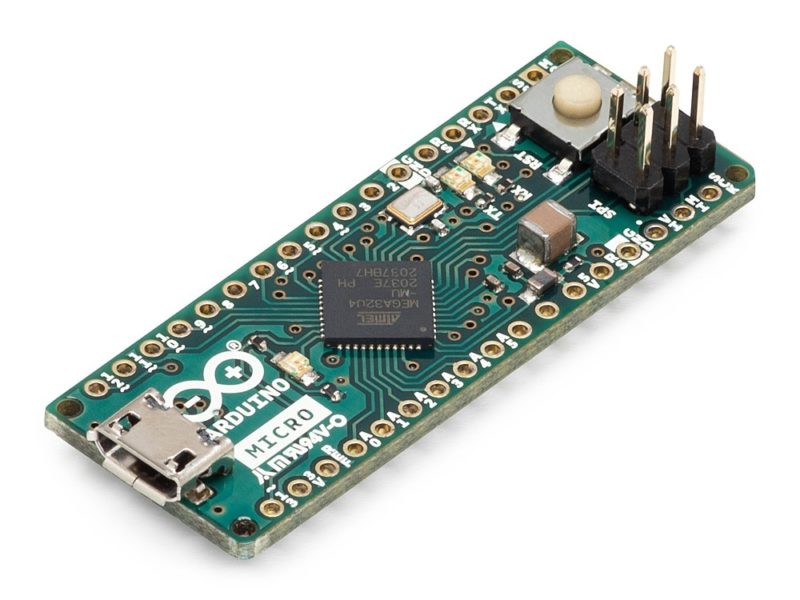

I’ve got a new hobby, though: home automation. I’ve replaced some of my overhead lights with Philips Hue bulbs, I’ve installed a few fancy Zigbee dimmers with an extra button, and I’m working on custom firmware for some cheaper Wi-Fi-enabled switches. Still, some things are a much better experience with a screen, especially customization like adjusting the color of the lights.

So I thought I’d look into getting a touchscreen controller. Home Assistant has a very full-featured web interface, so practically any tablet with a web browser would work.
